Light in the darkness: New Corelight Encrypted Traffic Collection

Version 18 of our software features the Encrypted Traffic Collection which focuses on SSH, SSL/TLS certificates and insights into encrypted network...

Recently I heard that a company interested in Corelight was considering delaying their evaluation because of questions about SIEM technology. They currently have two SIEMs and are evaluating a third, possibly to replace the first two. They believed that they needed better clarity about SIEMs as a platform to store, query, and present Corelight data generated by the Zeek network security monitor.

While I have used log collection and SIEM platforms to review Zeek transaction logs, it is not necessary to wait for a SIEM before collecting Corelight data. In fact, I recommend collecting Corelight data as quickly as possible, regardless of any decisions regarding log collection or SIEMs. The sooner you start gathering Corelight data, the sooner you have evidence of normal, suspicious, and malicious activity in your environment. This post will discuss how Corelight makes it easy to generate and export Zeek logs, and why starting as early as possible is the best course of action.

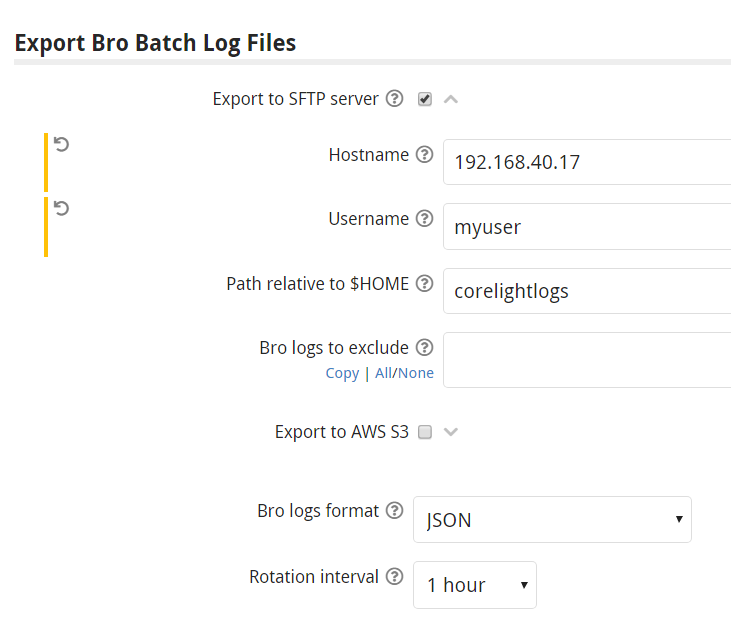

Corelight Sensors make it easy to begin generating and storing Zeek logs. In my lab, I send logs to a Linux server running Secure File Transfer Protocol (SFTP), which is usually enabled by default on servers running Secure Shell (SSH). After creating a user and a directory to store the logs, I copy the new user’s public SSH key to the Corelight sensor via the web-based user interface. Finally, I configure where and how I want the logs to be exported, as shown in figure 1 below:

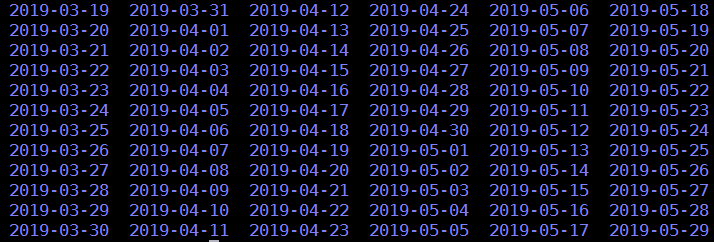

This configuration copies gzip-compressed Corelight logs in JSON format to 192.168.40.17 every hour. They end up in a directory on the SFTP server as shown in figure 2:

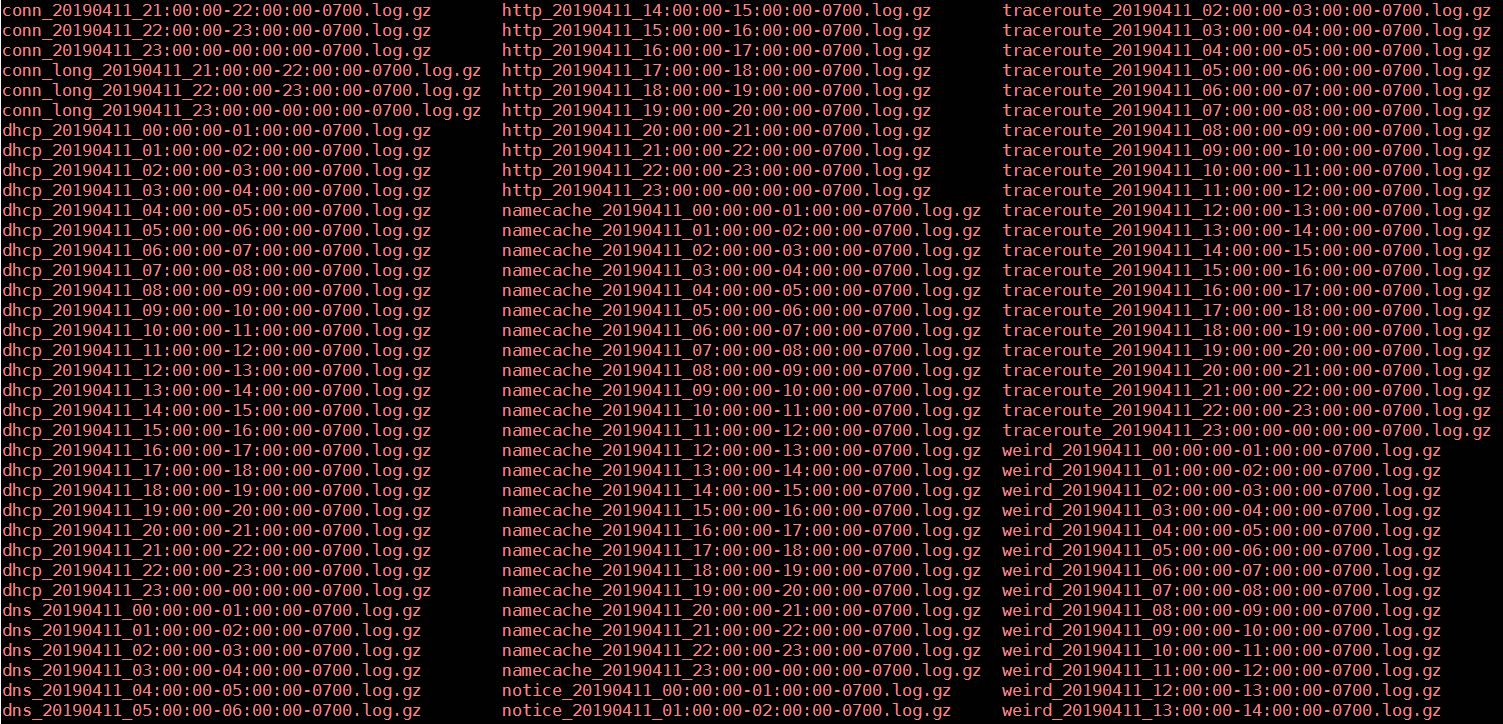

As seen in figure 3, they are gzip-encoded and saved in one-hour increments:

If I wish to query these logs in this format, I can use standard Unix commands. In the following example I check all logs for any entries containing the IP address 220.181.57.216.

$ zgrep 220.181.57.216 *

dns_20190411_07:00:00-08:00:00-0700.log.gz:{"ts":"2019-04-11T14:19:18.582708Z","uid":"C58wTV3HxJo4DpC2Gh","id.orig_h":"192.168.40.118","id.orig_p":60899,"id.resp_h":"192.168.40.18","id.resp_p":53,"proto":"udp","trans_id":63429,"query":"baidu.com","qclass":1,"qclass_name":"C_INTERNET","qtype":1,"qtype_name":"A","rcode":0,"rcode_name":"NOERROR","AA":false,"TC":false,"RD":true,"RA":true,"Z":0,"answers":["123.125.114.144","220.181.57.216"],"TTLs":[288.0,288.0],"rejected":false}
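
A text search such as zgrep matches the address anywhere on a line. Where a field-aware match is more useful, a short script can parse each JSON record instead. The following is a minimal sketch, not a Corelight tool: the function name `find_dns_answers` is hypothetical, and it assumes that each line of a decompressed log is one JSON object and that DNS log file names begin with `dns_`, as in the example output above.

```python
import gzip
import json
from pathlib import Path


def find_dns_answers(log_dir, ip):
    """Yield (file name, record) for DNS entries whose answers include ip."""
    # Assumption: DNS logs are named dns_*.log.gz, as in the example above.
    for path in sorted(Path(log_dir).glob("dns_*.log.gz")):
        # Assumption: one JSON object per line once decompressed.
        with gzip.open(path, "rt", encoding="utf-8") as handle:
            for line in handle:
                line = line.strip()
                if not line:
                    continue
                record = json.loads(line)
                # "answers" may be absent when a query received no reply.
                if ip in (record.get("answers") or []):
                    yield path.name, record
```

Run from the log directory, `list(find_dns_answers(".", "220.181.57.216"))` would collect only the DNS records that returned that address as an answer, ignoring lines where the same string appears in some other field.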

My previous blog posts often feature Corelight logs queried using command line tools, so please check them out for other examples.

You may have seen in figure 1 that I could have exported these same files to Amazon’s S3 storage system. That is a great option for anyone not wishing to store data on a server, network attached storage device, or other on-premises solution.

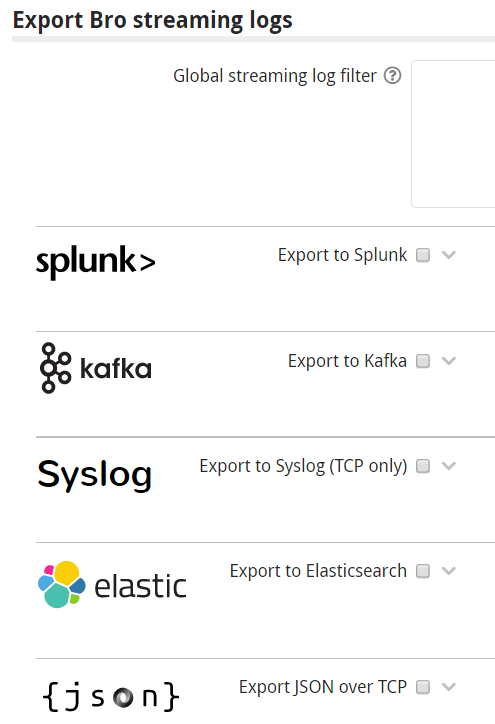

Corelight also supports exporting logs via streaming to up to five simultaneous destinations, as shown in figure 4:

What happens when an incident response team deploys a SIEM, now that they have a repository of Corelight files? If they have logs stored in the manner described here, they can point the SIEM at the directories containing Corelight data. The SIEM will process them and make them available for analysis, whether as part of a bake-off or final product deployment.

This approach has several benefits. First, stored Corelight data can be used to evaluate several SIEMs. Because the original Corelight data was saved to disk (i.e., not streamed), analysts can be sure each SIEM gets the exact same data. None was lost or corrupted in transit. Queries against the SIEM should produce the same results as queries against Corelight data saved to disk.
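
One way to check that a SIEM ingested everything is to count the records on disk per log type and compare those totals with the counts the SIEM reports. The sketch below is an illustration under stated assumptions: the file naming pattern is inferred from the single example file name in this post, the function name `count_records` is hypothetical, and each non-empty line is treated as one record.

```python
import gzip
import re
from collections import Counter
from pathlib import Path

# Assumed naming pattern, inferred from the example file name in this post:
# <log type>_<YYYYMMDD>_<time window>.log.gz
LOG_NAME = re.compile(r"^(?P<log_type>.+?)_\d{8}_")


def count_records(log_dir):
    """Return a Counter mapping log type to the number of records on disk."""
    counts = Counter()
    for path in sorted(Path(log_dir).glob("*.log.gz")):
        match = LOG_NAME.match(path.name)
        log_type = match.group("log_type") if match else "unknown"
        with gzip.open(path, "rt", encoding="utf-8") as handle:
            # Each non-empty line is assumed to be one JSON record.
            counts[log_type] += sum(1 for line in handle if line.strip())
    return counts
```

If the SIEM reports fewer `dns` events for a given period than `count_records` finds on disk, that difference is worth investigating before the bake-off continues.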

Second, analysts gain exposure to all of the fields available in Corelight data, prior to seeing it rendered in a SIEM. Analysts may discover the SIEM is omitting, changing, or otherwise obscuring information that they need for investigating security events.
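
To make that comparison concrete, an analyst could list every field name that appears in a stored log and check it against the fields the SIEM exposes. This is a minimal sketch that assumes one JSON object per line. The function names and the `siem_fields` argument are hypothetical, and the SIEM field list would have to be gathered by hand or from the SIEM's own tooling.

```python
import gzip
import json


def field_names(log_path):
    """Return the sorted top-level field names seen in one gzip JSON log."""
    seen = set()
    with gzip.open(log_path, "rt", encoding="utf-8") as handle:
        for line in handle:
            if line.strip():
                seen.update(json.loads(line).keys())
    return sorted(seen)


def fields_missing_from(log_path, siem_fields):
    """Return fields present on disk but absent from the SIEM's field list."""
    known = set(siem_fields)
    return [name for name in field_names(log_path) if name not in known]
```

For the DNS record shown earlier, `field_names` would include entries such as `query`, `answers`, and `TTLs`. Any name returned by `fields_missing_from` is a field the SIEM may be dropping or renaming.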

Third, and most importantly from an incident detection and response perspective, analysts have not missed generating and storing days, weeks, or potentially months of valuable network security monitoring data. Intruders do not pause their activities, waiting for defenders to have their SIEM deployed! Rather, intruders take advantage of gaps in visibility and security processes to gain access, establish footholds, obscure command and control, and accomplish their mission.

The bottom line is that the sooner an organization begins collecting NSM logs, the better. This is true even if no one is immediately responsible for analyzing the data. Imagine an extreme situation where an organization collects NSM data, but has no one reviewing it. Suddenly, the organization learns that it may be the victim of a compromise or breach. Would that organization prefer to start collecting NSM data after realizing it’s a victim, or would it prefer to have days, weeks, or months of NSM data waiting for analysis?

As a former incident response consultant who dropped into compromised companies on a regular basis, I know that I want as much evidence ready for analysis as possible. NSM data helps me establish the scope and nature of the intrusion, which allows me to more rapidly and accurately recommend containment and mitigation efforts. Without that evidence, I have to start collecting on the first day of the engagement, and the adversary likely already knows I’m there because they have been monitoring the victim’s email.

Although this post frames the discussion in terms of pre- and post-SIEM deployments, there’s another benefit to mention before closing. By archiving a gzip-encoded version of your logs, in addition to whatever data you send to your SIEM, you are maintaining a repository of evidence that can be shared with other teams. That data can be anonymized, manipulated, pared, enriched, or otherwise transformed before sharing. Gzip-encoded, JSON-formatted Zeek logs give security teams maximum flexibility, whether used internally or externally.
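
As one illustration of such a transformation, the sketch below replaces the connection endpoint addresses in a log with keyed pseudonyms before the file is shared. It is a sketch under assumptions rather than a complete anonymization scheme: the function names are hypothetical, only the `id.orig_h` and `id.resp_h` fields from the example record are rewritten, and addresses that appear elsewhere (for example in `answers`) are left untouched.

```python
import gzip
import hashlib
import hmac
import json

# Field names taken from the example DNS record in this post.
IP_FIELDS = ("id.orig_h", "id.resp_h")


def pseudonymize(value, key):
    """Map a value to a stable token using HMAC-SHA256 with a secret key."""
    digest = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256)
    return "anon-" + digest.hexdigest()[:16]


def anonymize_log(src_path, dst_path, key):
    """Copy a gzip JSON log, replacing endpoint addresses with pseudonyms."""
    with gzip.open(src_path, "rt", encoding="utf-8") as src, \
            gzip.open(dst_path, "wt", encoding="utf-8") as dst:
        for line in src:
            if not line.strip():
                continue
            record = json.loads(line)
            for field in IP_FIELDS:
                if field in record:
                    record[field] = pseudonymize(record[field], key)
            dst.write(json.dumps(record) + "\n")
```

Because the same key always maps an address to the same token, recipients can still follow one host across records without learning its real address. The key itself has to stay private to the team that produced the data.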

Corelight makes it incredibly easy to generate and export NSM transaction logs. This data can save the day when you suspect your organization has been compromised. Don’t wait for a SIEM. Start collecting NSM data today!
